December 13, 2016.

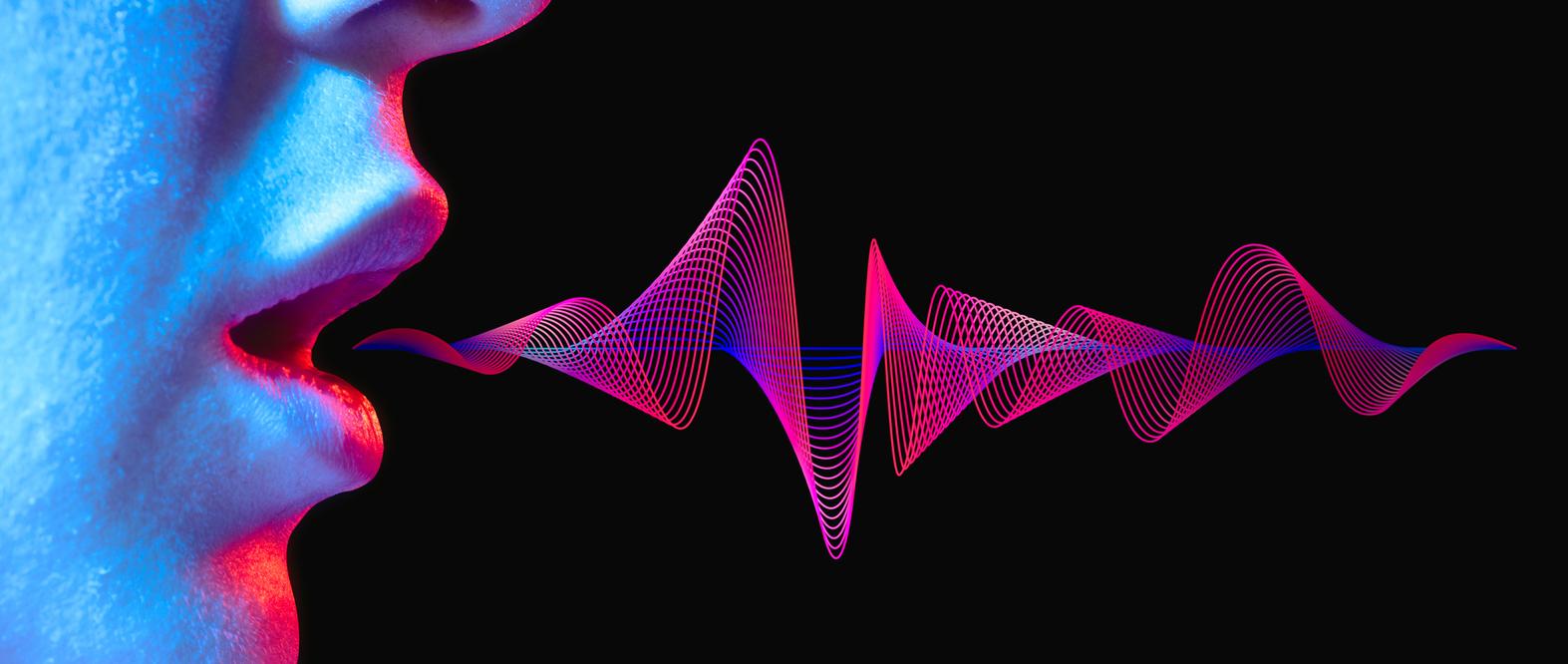

A team of researchers from the National Center for Scientific Research (CNRS) and the National Institute for Health and Medical Research (Inserm) has developed a voice synthesizer that allows people who cannot produce audible speech to talk.

A machine learning algorithm

Researchers from CNRS and Inserm worked together on an innovative project: building a synthesizer capable of reconstructing the speech of a person who articulates words but whose voice cannot be heard. The technology relies on an algorithm that captures and decodes a set of signals related to articulation.

"A machine learning algorithm is used to decode these articulatory movements using sensors placed on the tongue, lips and jaw, and convert them in real time into synthetic speech," the researchers specify in a press release. "Converting silent articulation into an intelligible speech signal has already been the subject of several studies."

Following the movements of the articulators

The synthesizer is made up of an ultrasound sensor placed under the person's jaw and a camera positioned at mouth level. "This combination makes it possible to simultaneously follow the movements of the internal articulators (such as the tongue) and the external ones (such as the lips)," the researchers add. Thanks to this system, the machine does not need to memorize a vocabulary, since the algorithm decodes only the movements.

This work is an important step, but the researchers do not intend to stop there. "These new results are a necessary step towards an even more ambitious goal," they explain in the journal PLOS Computational Biology, which published the study. In the long term, they would like to reconstruct the voice not by observing articulation, but from brain activity alone.