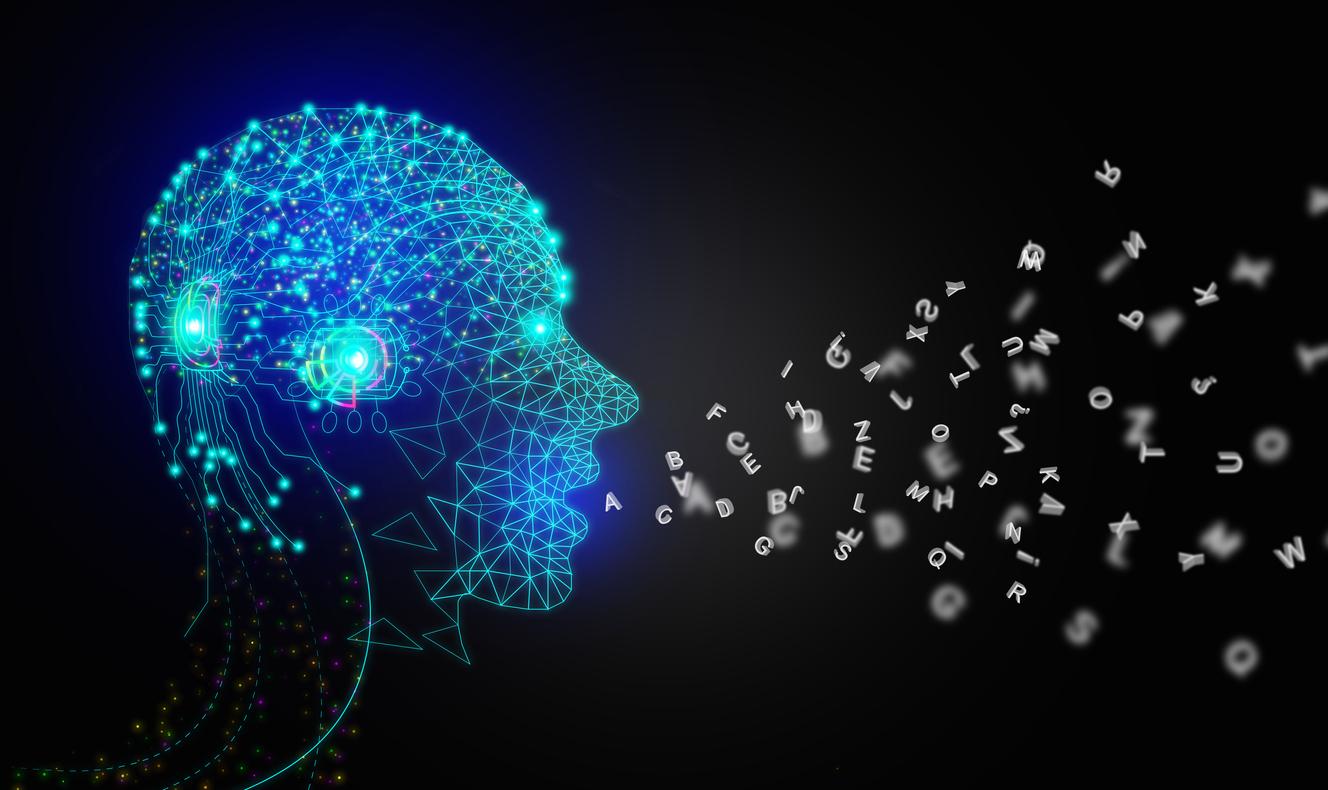

A patient paralyzed by Charcot’s disease can communicate again thanks to brain implants and artificial intelligence developed by researchers at Stanford University.

- Pat Bennett has Charcot’s disease. The crippling condition gradually caused her to lose her ability to speak.

- Stanford researchers have developed a device that can translate paralyzed people’s brain signals into words. It is faster and more efficient than previous technologies.

- Encouraged by the first results, the team hopes to improve the device so that paralyzed patients can use it every day and communicate more easily with those around them.

In 2012, Pat Bennett learned she had amyotrophic lateral sclerosis (ALS), a neurodegenerative condition (also known as Charcot’s disease) that causes progressive paralysis and that led her to lose her ability to communicate. However, thanks to the work of researchers at Stanford University, this 68-year-old American has regained the ability to express herself with the help of artificial intelligence (AI) and brain implants.

Implants in the brain to decode signals from speech areas

Stanford researchers have developed a device that translates paralyzed people’s brain signals into words with greater speed and accuracy than previous technologies. In their article published in Nature, they report implanting four sensors in the patient’s brain, specifically in areas related to speech production.

This technology relies on brain circuits that become active when the person tries to speak or thinks about speaking. These circuits remain active even if disease or injury prevents the signals from reaching the muscles involved in speech.

AI translates brain activity into words

After four months of training the software, Pat Bennett’s brain activity could be translated into words displayed on a screen at a speed of 62 words per minute, about three times faster than earlier technologies. For example, if she wants to ask for a drink, the device can produce “bring me a drink” or simply “I am thirsty”.

“The language model is essentially sophisticated autocorrect,” Jaimie Henderson, who worked on the technology, explained to NPR. “It takes all of these phonemes that have been turned into words, and then decides which of those words are the most appropriate in their context.” The AI has a vocabulary of 125,000 words and an accuracy rate of over 75%.
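The “sophisticated autocorrect” idea can be illustrated with a toy example. The sketch below is not the Stanford team’s software: the candidate words, their scores, and the word-pair probabilities are all invented for illustration, and a simple bigram model stands in for the real language model. It only shows how context can overrule a word that looks slightly more likely on its own.

```python
import math

# Hypothetical candidates for each word position. The scores are made up and
# stand in for how well each word matches the decoded phonemes.
CANDIDATES = [
    {"bring": 0.60, "ring": 0.40},
    {"me": 0.45, "knee": 0.55},
    {"a": 0.90, "uh": 0.10},
    {"drink": 0.45, "brink": 0.55},
]

# Hypothetical word-pair (bigram) probabilities playing the role of the
# language model. "<s>" marks the start of the sentence.
BIGRAMS = {
    ("<s>", "bring"): 0.3, ("<s>", "ring"): 0.1,
    ("bring", "me"): 0.4, ("ring", "me"): 0.2,
    ("me", "a"): 0.3, ("knee", "a"): 0.05,
    ("a", "drink"): 0.2, ("a", "brink"): 0.001,
}
UNSEEN = 1e-4  # assumed probability for word pairs missing from BIGRAMS


def pick_without_context(candidates):
    """Take the best-scoring word at each position, ignoring its neighbours."""
    return [max(options, key=options.get) for options in candidates]


def pick_with_context(candidates, bigrams, unseen=UNSEEN):
    """Viterbi search: best sentence under phoneme score times bigram score."""
    best = {"<s>": (0.0, [])}  # last word -> (log score, words so far)
    for options in candidates:
        step = {}
        for word, match in options.items():
            step[word] = max(
                (
                    score + math.log(match)
                    + math.log(bigrams.get((prev, word), unseen)),
                    path + [word],
                )
                for prev, (score, path) in best.items()
            )
        best = step
    return max(best.values())[1]


print(" ".join(pick_without_context(CANDIDATES)))        # bring knee a brink
print(" ".join(pick_with_context(CANDIDATES, BIGRAMS)))  # bring me a drink
```

Taken one at a time, “knee” and “brink” score slightly higher than “me” and “drink”, but the word-pair probabilities make “bring me a drink” the most plausible sentence overall.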

AI and paralysis: new hope for patients with speech loss

Although the device is not yet compact enough to be used at home or in everyday life, researchers are optimistic about its future deployment and its positive impact on the lives of people who have lost the ability to communicate. “This is an encouraging proof of concept,” the expert told NPR. “I am convinced that in 5 or 10 years from now we will see these systems appear in homes.”

In the British press, Pat Bennett also shared her hope that she could “maybe continue to work, maintain friendly and family relations” with this new technology.

Indeed, the advances made by the Stanford University team open up new perspectives for people with neurodegenerative pathologies such as ALS. Thanks to this AI, communication could be partially restored, thus offering patients a way to express themselves and interact with those around them.